I have an API that needs to be load tested.

I am planning to reach 10 RPS by running parallel scenarios. Here are my k6 options:

    export const options = {
      setupTimeout: '15m0s',
      teardownTimeout: '15m0s',
      thresholds: {
        // Fail the run if any request fails ('==' is the comparison operator).
        http_req_failed: ['rate==0.00'],
        // 99% of requests must complete in under 2000 ms.
        http_req_duration: ['p(99) < 2000'],
      },
      scenarios: {
        // timeUnit is not set, so each rate is iterations per second (default '1s').
        scenario1: {
          executor: 'constant-arrival-rate',
          rate: 2,
          duration: '1m0s',
          preAllocatedVUs: 2,
          maxVUs: 20,
          exec: 'functionone',
        },
        scenario2: {
          executor: 'constant-arrival-rate',
          rate: 3,
          duration: '1m0s',
          preAllocatedVUs: 2,
          maxVUs: 20,
          exec: 'functionOne',
        },
        scenario3: {
          executor: 'constant-arrival-rate',
          rate: 5,
          duration: '1m0s',
          preAllocatedVUs: 2,
          maxVUs: 20,
          exec: 'functionTwo',
        },
      },
      summaryTrendStats: ['avg', 'min', 'med', 'max', 'p(95)', 'p(99)', 'count'],
    };
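
Each `exec` value has to match an exported function by its exact name, so `functionone` (scenario1) and `functionOne` (scenario2) would point at different exports. The sketch below is a quick plain-JavaScript check of that. The exported function list is an assumption, because the script's exports are not shown in this post; `findUnmatchedExec` is a hypothetical helper, not part of k6.

```javascript
// Scenario name -> exec name, copied from the options above.
const scenarioExec = {
  scenario1: 'functionone',
  scenario2: 'functionOne',
  scenario3: 'functionTwo',
};

// ASSUMPTION: the script exports these two functions. The real exports are
// not shown in this post, so adjust this list to match the actual script.
const exportedFunctions = ['functionOne', 'functionTwo'];

// Return every scenario whose exec name has no exact, case-sensitive match.
function findUnmatchedExec(scenarios, exported) {
  return Object.entries(scenarios)
    .filter(([, exec]) => !exported.includes(exec))
    .map(([name, exec]) => ({ name, exec }));
}

const unmatched = findUnmatchedExec(scenarioExec, exportedFunctions);
console.log(unmatched);
```

Under the assumed exports, only scenario1 is reported as unmatched. If the real script does export a function named `functionone`, the list is empty and the names are fine as posted.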

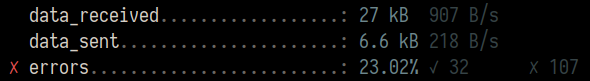

With the options above, I am running three scenarios in parallel with different rate values (2, 3, and 5). Together they should produce 10 RPS in both iterations and http_reqs, but the end-of-test summary reports only about 5.6 iterations/s and 5.8 requests/s.
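
To make the expectation concrete, here is a minimal sketch of the arithmetic in plain JavaScript. It assumes each rate is per second (no `timeUnit` is set in the options, so the one-second default applies) and that all three scenarios run for the same 60 seconds. The object layout is only for illustration and is not a k6 structure.

```javascript
// Rates and durations copied from the three scenarios in the options.
// ASSUMPTION: rate is per second, since timeUnit is left at its default.
const scenarios = [
  { name: 'scenario1', rate: 2, durationSeconds: 60 },
  { name: 'scenario2', rate: 3, durationSeconds: 60 },
  { name: 'scenario3', rate: 5, durationSeconds: 60 },
];

// Combined arrival rate while all scenarios run at the same time.
const expectedRate = scenarios.reduce((sum, s) => sum + s.rate, 0);

// Total iterations the scenarios are expected to start over their duration.
const expectedIterations = scenarios.reduce(
  (sum, s) => sum + s.rate * s.durationSeconds,
  0
);

console.log(`expected rate: ${expectedRate} iterations/s`);
console.log(`expected iterations: ${expectedIterations}`);
```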

    http_req_sending...............: avg=68.72µs  min=19µs     med=65µs     max=491µs    p(95)=127.44µs p(99)=161.45µs count=752
    http_req_tls_handshaking.......: avg=5.3ms    min=0s       med=0s       max=619.22ms p(95)=0s       p(99)=181.54ms count=752
    http_req_waiting...............: avg=417.42ms min=283.03ms med=321.08ms max=13.37s   p(95)=546.76ms p(99)=1.67s    count=752
    http_reqs......................: 752     5.800814/s
    iteration_duration.............: avg=445.56ms min=297.58ms med=322.11ms max=46.36s   p(95)=510.52ms p(99)=969.75ms count=722
    iterations.....................: 720     5.553971/s
    vus............................: 0       min=0  max=13
    vus_max........................: 13      min=12 max=13
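
One way to read these counters is to divide each count by its per-second figure, which gives the time window the rate was averaged over. This is a rough sketch, assuming the per-second value is simply the count divided by elapsed time; `impliedWindowSeconds` is a hypothetical helper, not a k6 function.

```javascript
// Counts and per-second rates copied from the summary above.
const summary = {
  http_reqs: { count: 752, perSecond: 5.800814 },
  iterations: { count: 720, perSecond: 5.553971 },
};

// ASSUMPTION: perSecond = count / elapsedSeconds,
// so elapsedSeconds = count / perSecond.
function impliedWindowSeconds({ count, perSecond }) {
  return count / perSecond;
}

for (const [name, metric] of Object.entries(summary)) {
  const seconds = impliedWindowSeconds(metric).toFixed(1);
  console.log(`${name}: averaged over ~${seconds} s`);
}
```

Both counters imply a window of about 130 seconds, roughly twice the `1m0s` scenario duration. If that reading is right, the averaged figure would be lower than the rate while the scenarios were actually running. What k6 counts in that window (setup, teardown, graceful stop) is not confirmed here.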

What am I doing incorrectly? Am I missing any configuration? Please help me achieve 10 RPS using multiple parallel scenarios.

Much appreciated